This article is part of a series examining how the field’s leading governance frameworks address identity, trust, and control for AIgentic systems. The central thesis, established in Governing AIgentic Actors: Identity, Trust and Control, is that the governance problem in AIgentic systems is not solved by verifying Actors more carefully; it is dissolved by building environments where the scope of what an Actor can do is constrained before any verification occurs. Each article in this series evaluates one framework against that thesis: what it contributes, where its verification posture reaches a structural limit, and what a security leader must add to close it.

The Cloud Security Alliance published the Agentic Trust Framework in February 2026. ATF is the most operationally complete external specification for AIgentic governance currently available. It applies Zero Trust principles to AI agents across five domains and introduces a maturity model that ties agent autonomy to demonstrated governance readiness.[1] These are genuine contributions to a field where most published frameworks are either taxonomic or aspirational. This article evaluates ATF precisely: what it establishes, where its verification posture reaches a structural limit, and what a security leader must add to make it operational.

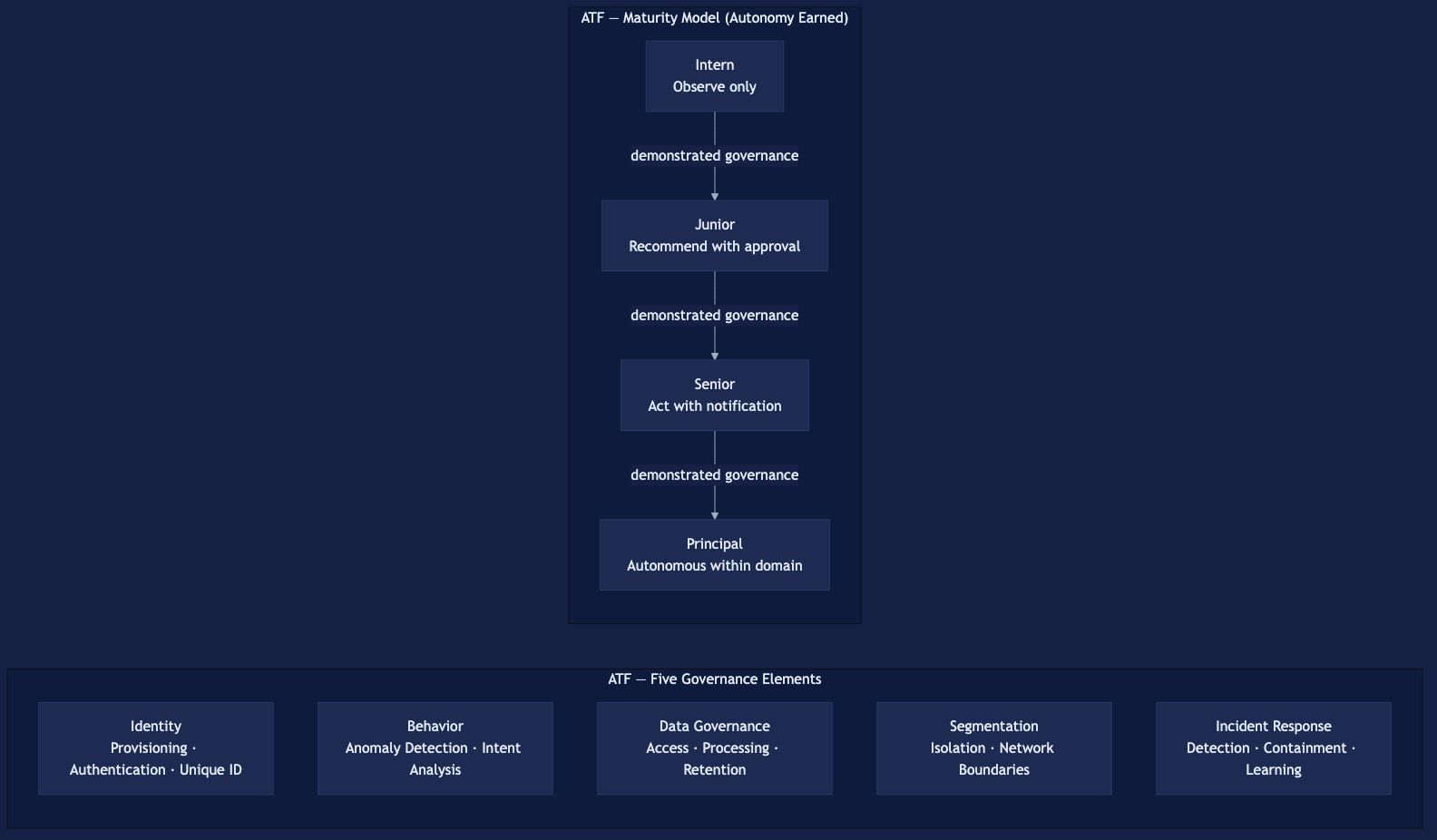

The Agentic Trust Framework organizes governance across five elements.

Identity addresses who the agent is: provisioning, authentication, and unique identifier assignment. Behavior addresses what the agent does: anomaly detection, intent analysis, and deviation monitoring. Data Governance addresses what data the agent accesses, processes, and retains. Segmentation addresses how agents are isolated from each other and from human-access infrastructure. Incident Response addresses how the organization detects, contains, and learns from agent-related security events.

ATF also defines a four-tier maturity model: Intern, Junior, Senior, and Principal. Autonomy is earned, not granted by default.[1] An Intern-level agent operates under close supervision with a narrowly bounded action space. A Principal-level agent operates with delegated authority commensurate with demonstrated governance maturity. The model is a governance staging mechanism. It ties agent capability to the organization’s capacity to govern it.
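The staging idea can be made concrete with a small sketch. It uses the one-line tier descriptions given later in this article (observe only, recommend with approval, act with notification, autonomous within domain). The function name `decide`, its parameters, and the returned strings are hypothetical and chosen only for illustration; they are not part of the ATF specification.

```python
from enum import IntEnum


class Tier(IntEnum):
    """ATF maturity tiers, lowest to highest autonomy."""
    INTERN = 1     # observe only
    JUNIOR = 2     # recommend with approval
    SENIOR = 3     # act with notification
    PRINCIPAL = 4  # autonomous within domain


def decide(tier: Tier, action: str, in_domain: bool, approved: bool = False) -> str:
    """Map a tier and a requested action to a gating decision (illustrative only)."""
    if not in_domain:
        return "deny"              # no tier grants authority outside its domain
    if action == "observe":
        return "allow"             # every tier may observe
    if tier == Tier.INTERN:
        return "deny"              # Interns never act
    if tier == Tier.JUNIOR:
        # Juniors only recommend; a human approval releases the action.
        return "allow" if approved else "needs_approval"
    if tier == Tier.SENIOR:
        return "allow_and_notify"  # act, but tell a human
    return "allow"                 # Principal: autonomous within domain


if __name__ == "__main__":
    for tier in Tier:
        print(tier.name, decide(tier, "write", in_domain=True))
```

The point of the sketch is that the decision depends on the tier assigned by the organization, not on anything the agent asserts about itself.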

What does the Agentic Trust Framework establish?

ATF is Zero Trust applied to AI agents. Zero Trust’s core principle, “never trust, always verify,” extends across five governance elements, with a maturity dimension added. That framing is precise and operationally useful.

ATF makes two contributions the field needed. The maturity model provides a staging mechanism: autonomy is earned, not granted by default.[1] A security team can place a given agent population on the Intern-to-Principal spectrum. Governance then follows from that placement. The Segmentation element acknowledges network isolation as a governance domain, not merely an infrastructure concern. Both contributions are operationally meaningful. Neither appears in most of the other published frameworks.

ATF does not specify two things. It does not prescribe the mechanism by which cryptographic delegation is encoded and verified between agents. It does not prescribe an enforcement substrate that makes the Segmentation element mandatory rather than configurable. Those are implementation decisions the framework leaves to the practitioner. Identifying them is the starting point for making ATF operational.

Where does the verification posture reach its structural limit?

ATF’s trust mechanism in the Behavior element is behavioral verification: anomaly detection and intent analysis applied to agent actions.[1] That is a monitoring posture. It detects deviation after the agent has already acted.

For non-deterministic actors, this creates a specific structural problem. An AIgentic Actor’s behavioral state is a function of its current context window. That window includes the system prompt, conversation history, tool outputs ingested, and any semantic content injected mid-task. The Actor provisioned correctly at spawn holds the same credential three tool-calls later. Its operational state may be fundamentally different.

A prompt-injected Actor presents a valid credential while executing attacker instructions. The credential is authentic. The behavior is not. Probabilistic behavioral detection applied to a non-deterministic actor produces a confidence interval, not a guarantee. Confidence intervals are not an audit trail.

This is not a critique of ATF’s implementation. It is a structural property of the verification paradigm itself. Zero Trust makes verification more rigorous and continuous. However, more rigorous verification does not resolve the underlying problem when the entity being verified can be semantically altered mid-execution.

The architectural alternative addresses this at the topology layer. An AIgentic Actor inside a subnet with deny-all egress traverses only the semantic proxy.[2] It cannot reach human-access infrastructure regardless of its credential state or model-layer compromise. The proxy is out-of-band. It is invisible to the primary Actor and unreachable by model-layer manipulation. A prompt-injected Actor cannot disable the proxy because it cannot perceive the proxy’s existence. The security control operates outside the attack surface of the entity being attacked.
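A minimal sketch of the deny-all egress decision follows. It is a model of the rule, not a firewall configuration. The class name `EgressPolicy`, the proxy address `10.0.9.10/32`, and port `8443` are assumptions made up for illustration. Note that the decision function takes no credential or identity argument: what the Actor can reach is decided by destination alone.

```python
from dataclasses import dataclass
from ipaddress import ip_address, ip_network


@dataclass(frozen=True)
class EgressPolicy:
    """Deny-all egress with a single permitted destination: the proxy."""
    proxy_cidr: str   # hypothetical address range of the semantic proxy
    proxy_port: int   # hypothetical listening port of the proxy

    def permits(self, dest_ip: str, dest_port: int) -> bool:
        # Default is deny. The only allowed flow is to the proxy endpoint.
        # The Actor's credential state is deliberately not an input here.
        to_proxy = ip_address(dest_ip) in ip_network(self.proxy_cidr)
        return to_proxy and dest_port == self.proxy_port


if __name__ == "__main__":
    policy = EgressPolicy(proxy_cidr="10.0.9.10/32", proxy_port=8443)
    for dest in [("10.0.9.10", 8443), ("10.0.1.5", 443), ("10.0.9.10", 22)]:
        print(dest, "permit" if policy.permits(*dest) else "deny")
```

In a real deployment this rule would live in the network layer (security groups, network policies, or firewall rules), outside anything the model can read or modify.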

This is the precise distinction between Zero Trust and a topology-first architectural design pattern. Zero Trust makes the verification question more rigorous. A topology-first architectural design pattern makes the verification question structurally irrelevant to the safety outcome. Inside a bounded environment, the Actor’s trustworthiness does not determine what it can reach. The topology determines that. Verification determines attribution. The Semantic Proxy Pattern presents this architectural design pattern in full.

ATF’s Segmentation element acknowledges network isolation as a governance domain. However, it does not specify an enforcement substrate that makes isolation mandatory rather than configurable. That is the boundary where ATF’s contribution ends and implementation guidance must begin.

What does a security leader need to add to make ATF operational?

ATF provides a framework. Making it operational requires four additions the specification does not prescribe. The scale of the problem that ATF addresses is measurable: only 23% of organizations have a formal, enterprise-wide strategy for agent identity management, and only 18% express high confidence that their current IAM can handle agent identities at all.[3]

The first addition is a topological enforcement substrate. Deny-all egress with mandatory proxy traversal makes ATF’s Segmentation element hard rather than configurable. An AIgentic Actor cannot route around a subnet it cannot perceive. This is an infrastructure decision made at deployment. Organizations that skip it at deployment will not retrofit it cleanly into a production agentic environment.

The second addition is per-Actor cryptographic delegation between agents. ATF’s Identity element addresses agent authentication. It does not specify how delegation chains are encoded and verified between principals. The IETF OAuth On-Behalf-Of draft introduces the act claim and requested_actor parameter to carry delegation semantics in the token itself.[4] That mechanism fills a gap ATF leaves to the implementer.
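To show what delegation semantics carried in the token can look like, here is a hedged sketch that walks an `act` claim in an already-decoded token payload. It assumes the nesting convention from OAuth 2.0 Token Exchange (RFC 8693), where the outermost `act` is the current actor and nested `act` objects are prior actors; whether the draft cited above uses exactly this structure is an assumption. Signature validation is omitted entirely, and the helper names and identifiers (`user-123`, `agentlet-7`, `orchestrator-1`) are hypothetical.

```python
def delegation_chain(claims: dict) -> list:
    """Return actor subjects from an `act` claim, current actor first."""
    chain = []
    act = claims.get("act")
    while isinstance(act, dict):
        if "sub" not in act:
            raise ValueError("act claim without a subject")
        chain.append(act["sub"])
        act = act.get("act")  # nested act = the actor that delegated earlier
    return chain


def delegation_ok(claims: dict, expected_user: str, allowed_actors: set) -> bool:
    """Check the token names the expected user and only known actors."""
    chain = delegation_chain(claims)
    return (
        claims.get("sub") == expected_user
        and len(chain) > 0
        and all(actor in allowed_actors for actor in chain)
    )


if __name__ == "__main__":
    # Payload as it might look AFTER signature verification (not shown).
    claims = {
        "sub": "user-123",
        "act": {"sub": "agentlet-7", "act": {"sub": "orchestrator-1"}},
    }
    print(delegation_chain(claims))
    print(delegation_ok(claims, "user-123", {"agentlet-7", "orchestrator-1"}))
```

A production verifier would first validate the token signature, issuer, audience, and expiry with a vetted JWT library before trusting any claim.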

The third addition is the Actor Identity Lifecycle applied as an operational discipline. ATF’s maturity model defines what an agent tier looks like. The Actor Identity Lifecycle defines the governance acts that move an agent through those tiers. Those acts are: provisioning, scoping, delegation, audit, and revocation. Without the lifecycle, the maturity model is a classification. With the lifecycle, it is an operational checklist.
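One way to turn the five governance acts into a checklist is a record that refuses acts applied out of order. The sketch below is an illustration under stated assumptions: the prerequisite ordering (each act requires the one before it, revocation is possible any time after provisioning, nothing follows revocation) is a modeling choice made here, not something the lifecycle or ATF prescribes, and `ActorRecord` is a hypothetical name.

```python
from dataclasses import dataclass, field

# Each act and the act that must already have happened (assumed ordering).
PREREQUISITE = {
    "provisioning": None,
    "scoping": "provisioning",
    "delegation": "scoping",
    "audit": "delegation",
    "revocation": "provisioning",
}


@dataclass
class ActorRecord:
    actor_id: str
    history: list = field(default_factory=list)  # acts applied, in order

    def apply(self, act: str) -> None:
        """Record a governance act, rejecting unknown or out-of-order acts."""
        if act not in PREREQUISITE:
            raise ValueError(f"unknown act: {act}")
        if "revocation" in self.history:
            raise ValueError("actor is revoked; no further acts allowed")
        if act == "provisioning" and act in self.history:
            raise ValueError("actor is already provisioned")
        needed = PREREQUISITE[act]
        if needed is not None and needed not in self.history:
            raise ValueError(f"{act} requires {needed} first")
        self.history.append(act)


if __name__ == "__main__":
    record = ActorRecord("agent-1")
    for act in ("provisioning", "scoping", "delegation", "audit", "revocation"):
        record.apply(act)
    print(record.history)
```

The history list doubles as a simple audit trail: it shows which governance acts an Actor has actually passed through, which is what distinguishes a checklist from a classification.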

The fourth addition is explicit governance of Agentlets. ATF’s maturity model governs agents as discrete principals. It does not address Agentlets as a governed class. In a production multi-agent environment, an orchestrator at Principal maturity may spawn dozens of Agentlets. None of them will have been evaluated against any ATF maturity tier. Governing the AIgentic Actor population requires governance at the Actor class level, not the instance level.

ATF identifies these as implementation decisions. That framing is correct. A framework cannot prescribe every architectural choice. For a security leader, the question is where the framework ends and implementation begins. That boundary is the difference between a governance program and a governance label.

Does the Agentic Trust Framework require certification or accreditation?

Not yet. ATF is an open governance specification with no currently operational certification program. MassiveScale.AI, the framework’s creator, has announced an ATF Verified assessment and an ATF Certified third-party audit program, both currently at the waitlist stage.[5] Organizations can adopt ATF’s principles now and implement them against their own environment and tooling. Certification infrastructure is in development and cannot yet be assessed.

How does ATF relate to the NIST AI Agent Standards Initiative?

NIST launched the AI Agent Standards Initiative in February 2026.[6] Its associated concept paper identifies four technical focus areas: identification, authorization, access delegation, and audit logging. ATF and NIST are not competing frameworks. They operate on different timescales. ATF is a published specification a security team can act on now. NIST’s initiative is developing standards infrastructure that will take multiple years to reach normative guidance. The practical approach: treat ATF as the actionable framework for the current fiscal year. Treat NIST’s output as the direction the standards landscape will take over three to five years.

Can ATF’s maturity model govern Agentlets as well as orchestrator-level agents?

Not directly. ATF’s maturity model evaluates agents as discrete principals: Intern (observe only), Junior (recommend with approval), Senior (act with notification), and Principal (autonomous within domain). Agentlets are spawned sub-agents that may exist for seconds and complete a single task. ATF’s maturity model does not define Agentlets as a governed class. This is a gap for security teams deploying multi-agent orchestration. Agentlets are the most numerous class of AIgentic Actor in a production agentic environment. They are also the class least likely to have been evaluated against any maturity tier. Governing them requires applying ATF’s principles at the class definition level. The class definition specifies what kind of Agentlet is permitted to exist, with what scope, and for how long. The Actor Identity Lifecycle extends ATF’s maturity model to cover the full Actor population that way.
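Class-level governance can be sketched as a registry of permitted Agentlet classes, each declaring kind, scope, and maximum lifetime. Everything named here (`AgentletClass`, `REGISTRY`, `spawn`, the `summarizer` kind, and the tool names) is hypothetical and exists only to illustrate the idea; scope is modeled as a set of allowed tools, which is one assumption among several possible ones.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentletClass:
    kind: str                 # what kind of Agentlet may exist
    allowed_tools: frozenset  # with what scope
    max_lifetime_s: float     # and for how long


@dataclass(frozen=True)
class Agentlet:
    cls: AgentletClass
    spawned_at: float         # timestamp in seconds

    def may_call(self, tool: str, now: float) -> bool:
        """Allow a tool call only inside the class scope and lifetime."""
        alive = (now - self.spawned_at) <= self.cls.max_lifetime_s
        return alive and tool in self.cls.allowed_tools


# Only classes registered ahead of time may be instantiated.
REGISTRY = {
    "summarizer": AgentletClass("summarizer", frozenset({"read_doc"}), 30.0),
}


def spawn(kind: str, now: float) -> Agentlet:
    """Instantiate an Agentlet, refusing any kind that was never governed."""
    if kind not in REGISTRY:
        raise PermissionError(f"no governed class for kind: {kind}")
    return Agentlet(REGISTRY[kind], spawned_at=now)


if __name__ == "__main__":
    worker = spawn("summarizer", now=0.0)
    print(worker.may_call("read_doc", now=5.0))
    print(worker.may_call("send_email", now=5.0))
    print(worker.may_call("read_doc", now=60.0))
```

Because the review happens once per class rather than once per instance, an orchestrator can spawn many short-lived Agentlets without any of them escaping governance.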

Footnotes

1. Cloud Security Alliance. “The Agentic Trust Framework: Zero Trust Governance for AI Agents.” February 2026. https://cloudsecurityalliance.org/blog/2026/02/02/the-agentic-trust-framework-zero-trust-governance-for-ai-agents

2. Attribit-ID. “The Semantic Proxy Pattern.” https://attribit-id.com/writing/semantic-proxy-pattern

3. Strata Identity. “Securing Autonomous AI Agents.” Cloud Security Alliance, 2025. Survey of 285 IT and security professionals, conducted September–October 2025. Summary at https://www.strata.io/blog/agentic-identity/the-ai-agent-identity-crisis-new-research-reveals-a-governance-gap/

4. IETF. “OAuth 2.0 Extension: On-Behalf-Of User Authorization for AI Agents.” Individual Internet-Draft, version 01; no formal IETF working group or standards-track standing. https://www.ietf.org/archive/id/draft-oauth-ai-agents-on-behalf-of-user-01.html

5. MassiveScale.AI. “ATF Verified.” https://verifiedagents.ai

6. NIST. “Announcing the AI Agent Standards Initiative for Interoperable and Secure AI Agents.” February 2026. https://www.nist.gov/news-events/news/2026/02/announcing-ai-agent-standards-initiative-interoperable-and-secure