Every major governance framework for AIgentic systems is organized around verification: authenticate the agent, monitor its behavior, tighten policy enforcement at runtime. NIST, the Cloud Security Alliance, the IETF, and the Coalition for Secure AI have each built substantial work on this posture. That convergence is a signal that the problem is real. It is not a signal that the posture is complete. The governance problem in AIgentic systems is not solved by verifying actors more carefully. It is dissolved by building environments where the question of trust does not arise. Governance executed as an operation, the Actor Identity Lifecycle, is what makes accountability recoverable when something goes wrong.

The governance framework this article presents has three components.

The Actor Identity Lifecycle is the operational discipline of provisioning, scoping, delegation, audit, and revocation applied to every AIgentic Actor in the environment. This is the governance act. Controls are expressions of lifecycle decisions. They are not substitutes for them.

Non-human identity governance applies full IAM discipline to AIgentic Actors and Agentlets: lifecycle, access, and audit, with the same rigor applied to human principals. Identity is the control plane for AI agents.

The Semantic Proxy Pattern is a three-layer architectural substrate that makes lifecycle governance enforceable at the network layer, not merely declarable at the policy layer. The three layers: semantic proxy, subnet isolation, and per-Actor cryptographic identity.

These three components are not alternatives. They compose: the lifecycle defines what governance requires; the proxy enforces it; non-human identity governance is the organizational commitment that makes both sustainable at scale.

What is the right unit of governance: agent, system, or Actor?

NIST’s AI Agent Standards Initiative, launched February 2026, identifies four technical focus areas in its associated concept paper: identification, authorization, access delegation, and audit logging.1 The CSA Agentic Trust Framework asks “who are you?” as its identity element.2 The IETF AIGA draft tiers governance by agent capability.3 All three treat the agent as a software artifact or a risk tier. None treats it as an organizational principal with accountability attached.

The right unit of governance is the Actor.

Attribit-ID’s Actor ontology establishes a three-class model: Human Actor, Application Actor, and AIgentic Actor. Agentlets are first-class principals within the AIgentic Actor class. They are spawned sub-agents, analogous to threads or daemons in a concurrent system. The governance question shifts from “what tier is this agent?” to “who is this Actor, who authorized it, and who owns it?” Those are institutional questions, not engineering ones.

The distinction becomes concrete at the moment of failure. An agent without an Actor identity belongs to no one. Its actions cannot be traced to a specific provisioning decision, a specific authorization grant, or a specific human owner. “The workflow did it” is not an audit trail. Governance organized around agents can describe what happened. Governance organized around Actors can establish who was responsible. In a regulated environment, the difference between those two answers is the difference between forensic accountability and an organizational shrug.

The Berkeley Haas Agentic Operating Model, published March 2026, frames the governance question correctly: “Rather than asking whether agents are capable, the model asks whether they are governable.”4 Attribit-ID’s answer requires Actors, not agents, as the unit of analysis. “Governable” is an institutional property. It attaches to principals, not processes.

Why can’t behavioral verification produce trust in non-deterministic actors?

Verification-based trust assumes the entity being verified has a stable, deterministic identity. A human logs in. A device authenticates. The entity presenting a credential today is the same entity provisioned yesterday. AIgentic Actors do not have that property.

An AIgentic Actor’s behavioral state at any moment is a function of its current context window. That window includes the system prompt, conversation history, tool outputs ingested, and any semantic content encountered during the task. The Actor provisioned at spawn holds the same credential as the Actor making a request three tool-calls later. Their operational states may be fundamentally different. A prompt-injected Actor presents a valid credential while executing attacker instructions. The credential is authentic. The behavior is not.

The CSA Agentic Trust Framework addresses this through its Behavior element, applying anomaly detection and intent analysis as the trust mechanism.2 Behavioral monitoring is necessary. It is not sufficient. Monitoring is after-the-fact and probabilistic. It detects deviation after the Actor has already acted. Probabilistic verification applied to non-deterministic actors produces confidence intervals. Confidence intervals are not an audit trail.

The architectural alternative is the Semantic Proxy Pattern.5 An AIgentic Actor operating inside a subnet with deny-all egress and mandatory proxy traversal cannot reach human-access infrastructure regardless of its credential state, behavioral profile, or model-layer compromise. The proxy is out-of-band: invisible to the primary Actor and unreachable by model-layer manipulation. A prompt-injected Actor cannot disable the proxy because it cannot perceive that the proxy exists. The security control operates architecturally outside the attack surface of the entity being attacked.
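The proxy's decision logic can be illustrated with a minimal sketch. It is not the Semantic Proxy Pattern's actual implementation: the `ActorRequest` fields, the `ALLOWLIST` entries, and the hostnames are all hypothetical, chosen only to show an allowlist check that denies by default and treats any evaluation error as a denial.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ActorRequest:
    """One outbound action an Actor attempts (all fields are hypothetical)."""
    actor_id: str      # identity string presented by the workload
    actor_class: str   # governed class the instance belongs to
    destination: str   # host the action targets
    operation: str     # e.g. "read" or "write"


# Hypothetical allowlist of (actor_class, destination, operation) triples.
ALLOWLIST = {
    ("invoice-reader", "erp.internal.example", "read"),
    ("ticket-triager", "tickets.internal.example", "read"),
    ("ticket-triager", "tickets.internal.example", "write"),
}


def evaluate(request, allowlist=ALLOWLIST) -> bool:
    """Return True only when the action is explicitly allowlisted.

    Anything else, including a malformed request or a broken policy
    object, is denied: the check fails closed.
    """
    try:
        key = (request.actor_class, request.destination, request.operation)
        return key in allowlist
    except Exception:
        return False
```

Nothing in the decision depends on how the Actor behaves or what its context window contains. An action that is not on the list is not forwarded, whether the Actor is healthy or prompt-injected.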

Per-Actor cryptographic identity, in the form of SVIDs issued via SPIFFE/SPIRE,6 then produces attribution rather than safety. Safety is produced by the environment. Accountability is produced by identity. These are separate functions and must remain architecturally separate.
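As a rough sketch of what attribution from identity might look like, the snippet below parses a SPIFFE ID into the fields an audit record would need. The `spiffe://<trust-domain>/<path>` shape comes from the SPIFFE specification; the path layout `/actor-class/<class>/instance/<id>` and the `attribute` function are assumptions made for this example, not a convention the article or SPIFFE defines.

```python
from urllib.parse import urlsplit


def attribute(spiffe_id: str) -> dict:
    """Split a SPIFFE ID into attribution fields for an audit record.

    Assumes a hypothetical path layout:
        spiffe://<trust-domain>/actor-class/<class>/instance/<id>
    """
    parts = urlsplit(spiffe_id)
    if parts.scheme != "spiffe" or not parts.netloc:
        raise ValueError(f"not a SPIFFE ID: {spiffe_id!r}")

    segments = [s for s in parts.path.split("/") if s]
    if (
        len(segments) != 4
        or segments[0] != "actor-class"
        or segments[2] != "instance"
    ):
        raise ValueError(f"unexpected path layout: {parts.path!r}")

    return {
        "trust_domain": parts.netloc,
        "actor_class": segments[1],
        "instance": segments[3],
    }


record = attribute("spiffe://example.org/actor-class/invoice-reader/instance/7f3a")
```

The function makes no allow-or-deny decision. It only answers which Actor acted, which keeps attribution separate from the mechanism that produces safety.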

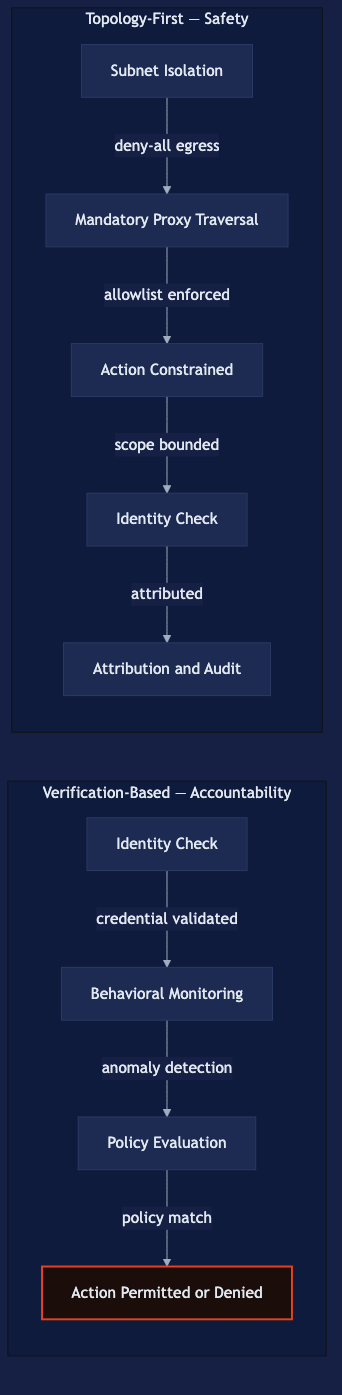

This is the precise distinction between Zero Trust and a topology-first architectural design pattern. Zero Trust is a verification posture: never trust, always verify. It makes the verification question more rigorous and continuous. A topology-first architectural design pattern makes the verification question structurally irrelevant to the safety outcome. Inside a topologically bounded environment, whether to trust an AIgentic Actor does not determine what it can reach. The topology determines that. Verification determines attribution and audit.

The four leading frameworks remain in the verification paradigm. CSA ATF adds behavioral monitoring.2 IETF AIGA adds tiered approval gates.3 NIST NCCoE addresses identification, authorization, access delegation, and audit for agents.7 CoSAI adds credential rotation and access reviews.8 Each makes verification more rigorous. None steps outside the verification paradigm. Subnet isolation is not a verification mechanism. It is an existence constraint: the Actor does not fail verification; it simply has nowhere to go.

What does governing AIgentic Actors mean as an operation?

The existing frameworks are taxonomic. They describe the governance problem with precision. They do not prescribe the governance act.

IETF AIGA proposes a Tiered Risk-Based Governance model and an Immutable Kernel Architecture.3 CSA ATF offers a maturity model where autonomy is earned, not granted by default.2 CoSAI’s April 2026 specification calls for purpose-specific entitlements, credential rotation, and access reviews.8 These are controls. Controls are expressions of governance decisions. They are not substitutes for the decision itself.

Governance is the Actor Identity Lifecycle executed as an organizational operation: provisioning, scoping, delegation, audit, and revocation. It applies to every AIgentic Actor in the environment, including Agentlets. The lifecycle answers questions that controls cannot. What authorized this Actor to exist? What scope was granted, and by whom? What Delegated Trust Chain does it participate in? When does its authorization expire, and who revokes it?
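One way to picture the lifecycle is as a governance record in which each of those questions has a field. The sketch below is illustrative only: `ActorRecord` and its field names are hypothetical, and a real deployment would keep this in an identity system, not an in-memory object.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass
class ActorRecord:
    actor_id: str
    authorized_by: str             # provisioning: who approved its existence
    owner: str                     # accountable human owner
    scope: frozenset               # scoping: what it may reach
    delegated_from: Optional[str]  # delegation: parent in the trust chain
    expires_at: datetime           # when authorization lapses on its own
    revoked_by: Optional[str] = None
    audit_log: list = field(default_factory=list)

    def record(self, event: str, at: datetime) -> None:
        """Audit: append an event attributed to this Actor."""
        self.audit_log.append((at.isoformat(), self.actor_id, event))

    def revoke(self, by: str, at: datetime) -> None:
        """Revocation: end authorization without touching the owner's access."""
        self.revoked_by = by
        self.record(f"revoked by {by}", at)

    def is_authorized(self, at: datetime) -> bool:
        return self.revoked_by is None and at < self.expires_at


now = datetime(2026, 5, 1, tzinfo=timezone.utc)
actor = ActorRecord(
    actor_id="invoice-reader-7f3a",
    authorized_by="finance-platform-lead",
    owner="accounts-payable-manager",
    scope=frozenset({"erp:read"}),
    delegated_from=None,
    expires_at=now + timedelta(minutes=5),
)
```

If any of the fields cannot be filled in for an Actor already in production, that gap is the governance finding.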

The scalability objection is legitimate and worth addressing directly. In production agentic deployments, Agentlets spawn and terminate in seconds. Per-instance lifecycle management is not tractable at that velocity. The resolution is lifecycle governance at the Actor class level, not the instance level. The Agentlet class is governed at definition: what types are permitted to exist, what scope they can inherit, what their maximum time-to-live is. Instances are governed by cryptographic enforcement of those class-level constraints: SVID time-to-live, scoped tokens, and subnet placement. The governance decision is made once, at class definition. Enforcement is automated at runtime. No lifecycle action is required per spawn.
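The class-versus-instance split can be sketched in a few lines. The example below assumes a hypothetical `ActorClassPolicy` and `issue_grant` function. It shows one governance decision made at class definition and then enforced mechanically on every spawn, with no per-instance review.

```python
from dataclasses import dataclass
from datetime import timedelta


@dataclass(frozen=True)
class ActorClassPolicy:
    """Decided once, at class definition (all names are hypothetical)."""
    name: str
    permitted_scopes: frozenset  # scopes an instance may inherit
    max_ttl: timedelta           # ceiling on credential lifetime
    subnet: str                  # where instances are placed


def issue_grant(policy, requested_scopes, requested_ttl):
    """Enforce class-level constraints on one spawn request.

    Out-of-class scopes are refused outright; the time-to-live is
    clamped to the class maximum.
    """
    scopes = frozenset(requested_scopes)
    if not scopes <= policy.permitted_scopes:
        extra = sorted(scopes - policy.permitted_scopes)
        raise PermissionError(f"scopes not permitted for {policy.name}: {extra}")
    return {
        "actor_class": policy.name,
        "scopes": scopes,
        "ttl": min(requested_ttl, policy.max_ttl),
        "subnet": policy.subnet,
    }


invoice_agentlet = ActorClassPolicy(
    name="invoice-agentlet",
    permitted_scopes=frozenset({"erp:read"}),
    max_ttl=timedelta(minutes=5),
    subnet="agent-subnet-a",
)
grant = issue_grant(invoice_agentlet, {"erp:read"}, timedelta(hours=1))
```

Here the one-hour request is cut to the class maximum of five minutes, and a request for a scope outside the class never produces a grant at all.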

The topology reinforces this directly. Agentlets born inside a subnet with deny-all egress operate within a class-level constraint that is enforced before any per-instance decision is required. The scalability problem is answered by automating enforcement of class-level governance decisions, not by abandoning per-Actor accountability.

CoSAI’s specification is the closest external parallel: it organizes around purpose-specific entitlements and access reviews.8 It frames these as access controls rather than as the Actor Identity Lifecycle as a discipline. The difference is operational. Controls applied without a lifecycle framework will be applied inconsistently, will not be revoked on schedule, and will accumulate as orphaned authorizations as the deployment grows. Non-human identity governance is not a one-time remediation project. It is a standing operational commitment, and the Actor Identity Lifecycle is what makes that commitment executable.

For more on the structural collapse of the application layer that makes Actor-level governance necessary in the first place, see The Identity Crisis at the Heart of AIgentic Systems.

What does the Identity Inheritance Model cost, and why is it the default?

The Identity Inheritance Model is not a bad implementation choice. It is the absence of a choice, made by default. When an AIgentic Actor runs under its orchestrating human principal’s identity, it inherits that principal’s permissions without any explicit provisioning decision. The model requires zero implementation work. That is why it is the default in every major orchestration platform. It carries compounding costs that become visible only after the population of ungoverned Actors reaches a scale the organization did not plan for.

Current data on that scale: only 23% of organizations have a formal, enterprise-wide strategy for agent identity management. Only 18% express high confidence that their current IAM can handle agent identities.9 Non-human identities already outnumber human identities at ratios from 45-to-1 to 144-to-1 in enterprise environments, with measurements increasing year-on-year.10 Gartner predicts that by 2028, at least 15% of day-to-day work decisions will be made autonomously by what the firm terms ‘agentic AI.’11 Organizations building on the inheritance model today are not establishing a baseline. They are building a migration problem.

The cost structure is specific. Inherited identity means no revocability without revoking the human principal’s access, no audit trail attributed to the Actor’s specific actions, and no scope boundary between the Actor’s authorization and the human’s. When a compromised Actor acts, the log shows the human’s identity. The blast radius is bounded by the human’s permissions, not the Actor’s task. In a Delegated Trust Chain across multiple Agentlet levels, that blast radius compounds at each level.
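The scope-boundary point reduces to set arithmetic. The sketch below uses made-up permission names and a hypothetical `blast_radius` helper to contrast what a compromised Actor can reach under inheritance with what it can reach under an explicit, task-sized scope.

```python
def blast_radius(human_permissions, actor_scope=None):
    """Permissions a compromised Actor can exercise.

    With no explicit scope (the inheritance model), the Actor holds
    everything its human principal holds. With a scope, it holds only
    the overlap between that scope and the principal's permissions.
    """
    if actor_scope is None:
        return set(human_permissions)
    return set(actor_scope) & set(human_permissions)


# Hypothetical permission names, for illustration only.
human = {"erp:read", "erp:write", "hr:read", "mail:send"}

inherited = blast_radius(human)            # all four permissions
scoped = blast_radius(human, {"erp:read"})  # only what the task needs
```

Under inheritance, every Agentlet spawned further down the chain starts from the same full set, which is why the exposure grows with each level.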

An ungoverned AIgentic Actor is not a misconfigured one. It is an Actor that governance has not yet reached. That framing matters because it defines where governance begins: not with a remediation pass over an existing population, but with the provisioning decision for the first Actor deployed. Organizations that defer that decision build retrofit costs that compound with every Actor added to the environment.

The governance argument is prospective, not retrospective. The inheritance model may be proportionate for today’s narrowly scoped deployments. It does not degrade gracefully as autonomy and scope increase. There is no natural checkpoint where inherited identity becomes inadequate and governance automatically engages. That transition must be designed. Organizations that do not design it will discover they needed it after something goes wrong.

The four leading frameworks (NIST NCCoE, CSA ATF, IETF AIGA, and CoSAI) are convergence signals. They confirm the problem is real, the industry is organizing around it, and standards are forming. NIST NCCoE’s concept paper completed its public comment period on April 2, 2026; no final guidance has been issued.7 IETF AIGA is an individual draft with no formal working group standing.3 CSA ATF has no operational certification program as of publication.2 CoSAI’s specification was approved by its Technical Steering Committee on March 20, 2026.8 None answers the practitioner’s question: given what is in production today, what does the security leader do first?

Three operational starting points follow from the framework this article presents. First, inventory your AIgentic Actors, specifically your Actors rather than just your agent deployments. Who owns each one? Who authorized it to exist, and with what scope? If those questions have no answers in a governance record, the Actor is operating under the Identity Inheritance Model by default. Second, decide where subnet isolation applies. The topology decision is made at deployment, not retrofitted into production. Each new AIgentic Actor deployment is an opportunity to build within a bounded environment; each deferred decision is a future migration item. Third, define one Actor class with full lifecycle governance (provisioning, scoping, delegation, audit, revocation) and use it as the template for all subsequent classes. Governance discipline is built through repetition of a correct process, not through taxonomic frameworks that describe the problem without prescribing the operation.
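The first starting point can be approximated with a simple check over whatever inventory already exists. The record layout and the `ungoverned` helper below are hypothetical. The sketch only flags Actors whose governance record cannot say who owns them, who authorized them, or with what scope.

```python
# Fields a governance record must answer (names are assumptions).
REQUIRED_FIELDS = ("owner", "authorized_by", "scope")


def ungoverned(inventory):
    """Return the Actors whose governance record has an unanswered question.

    A missing key, None, or an empty value all count as no answer.
    """
    return [
        record["actor"]
        for record in inventory
        if any(not record.get(name) for name in REQUIRED_FIELDS)
    ]


# Hypothetical inventory entries.
inventory = [
    {"actor": "invoice-reader", "owner": "ap-manager",
     "authorized_by": "finance-platform-lead", "scope": ["erp:read"]},
    {"actor": "ticket-triager", "owner": "support-lead",
     "authorized_by": None, "scope": ["tickets:read"]},
    {"actor": "report-builder", "owner": "", "scope": []},
]

flagged = ungoverned(inventory)
```

Any Actor on the resulting list is, by the article's definition, running under the Identity Inheritance Model by default.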

Identity is the control plane for AI agents. The Actor Identity Lifecycle is what operating that control plane looks like in practice.

Frequently asked questions

What is the difference between an AI agent and an AIgentic Actor?

An AI agent is a software artifact: a model, a process, an automated function. An AIgentic Actor is an organizational principal with accountability attached to it. The distinction determines whether governance is possible: agents can be described and monitored; Actors can be owned, authorized, audited, and revoked. Attribit-ID’s Actor ontology defines three classes: Human Actor, Application Actor, and AIgentic Actor, with Agentlets as a first-class sub-type of the AIgentic Actor class responsible for spawned, task-scoped operations.

Why isn’t Zero Trust sufficient for AIgentic environments?

Zero Trust is a verification posture: never trust, always verify. It assumes a stable, deterministic entity on the other end of the credential check. AIgentic Actors are non-deterministic. Their operational state shifts with their context window, making behavioral verification probabilistic rather than definitive. A topology-first architectural design pattern constrains an Actor’s action space at the network layer before any verification occurs, removing the dependency on probabilistic checks to produce safety. Zero Trust and topology-first governance are not alternatives. Topology-first makes the safety guarantee structural. Zero Trust makes the attribution record rigorous. The security leader needs both.

What is the Actor Identity Lifecycle?

The Actor Identity Lifecycle is the operational governance discipline for AIgentic Actors: provisioning (who authorized this Actor to exist and with what scope), scoping (what it is permitted to reach), delegation (what Delegated Trust Chain it participates in), audit (what record of its actions exists), and revocation (how authorization terminates without affecting the human principal). It is the non-human identity governance equivalent of the human identity lifecycle that IAM has managed for decades, applied at machine speed and Agentlet scale.

What is the Identity Inheritance Model and why is it a governance risk?

The Identity Inheritance Model is the default behavior in every major orchestration platform: an AIgentic Actor runs under its human principal’s identity and inherits that principal’s permissions without any explicit provisioning decision. It requires zero implementation work, which is why it is universal. The cost is specific: no Actor-specific revocability, no Actor-attributed audit trail, no scope boundary between the Actor’s authorization and the human’s. In a Delegated Trust Chain, the blast radius of a compromised Actor compounds at each level. It is not a bad choice. It is the absence of a choice, made automatically by every platform that has not implemented explicit Actor Identity Lifecycle governance.

What is the Semantic Proxy Pattern?

The Semantic Proxy Pattern is a three-layer reference architectural design pattern for AIgentic Actor authorization. Layer one is a mandatory semantic proxy that evaluates every Actor action against allowlist policy before it reaches the network. It operates out-of-band, invisible to the Actor, and fails closed by design. Layer two is subnet isolation that makes proxy traversal topologically mandatory, bounding blast radius at the hypervisor layer. Layer three is per-Actor cryptographic identity that enables instance-level attribution, role-specific policy, and granular revocation. Identity is the control plane for AI agents, but the layers serve two distinct functions: topology produces safety; identity produces accountability. The two functions must remain architecturally separate to remain reliable.

Footnotes

1. NIST, “Announcing the AI Agent Standards Initiative for Interoperable and Secure AI Agents,” February 2026. https://www.nist.gov/news-events/news/2026/02/announcing-ai-agent-standards-initiative-interoperable-and-secure The four technical areas (identification, authorization, access delegation, audit logging) are elaborated in NIST’s associated AI Agent Identity and Authorization Concept Paper rather than in the launch announcement itself.

2. Cloud Security Alliance, “The Agentic Trust Framework: Zero Trust Governance for AI Agents,” February 2026. https://cloudsecurityalliance.org/blog/2026/02/02/the-agentic-trust-framework-zero-trust-governance-for-ai-agents

3. IETF, draft-aylward-aiga-2-00, “AI Governance and Accountability Protocol (AIGA),” Individual Internet-Draft submission. No formal IETF working group or standards-track standing. https://datatracker.ietf.org/doc/draft-aylward-aiga-2/00/

4. Sandeep Saini, “Governing the Agentic Enterprise: A New Operating Model for Autonomous AI at Scale,” California Management Review, March 20, 2026. https://cmr.berkeley.edu/2026/03/governing-the-agentic-enterprise-a-new-operating-model-for-autonomous-ai-at-scale/

5. Charles Carrington, “The Semantic Proxy Pattern: A Defense-in-Depth Architecture for Enterprise AI Agent Authorization,” Attribit-ID, April 2026. https://attribit-id.com/writing/semantic-proxy-pattern

6. SPIFFE (Secure Production Identity Framework for Everyone) and SPIRE (SPIFFE Runtime Environment) are CNCF graduated projects. SVIDs (SPIFFE Verifiable Identity Documents) are defined in the SPIFFE specification. https://spiffe.io

7. NCCoE, “Accelerating the Adoption of Software and Artificial Intelligence Agent Identity and Authorization,” concept paper, February 2026. Public comment period closed April 2, 2026. https://www.nccoe.nist.gov/projects/software-and-ai-agent-identity-and-authorization

8. Coalition for Secure AI (CoSAI), “Agentic Identity and Access Management,” Technical Steering Committee approved March 20, 2026. https://www.coalitionforsecureai.org/wp-content/uploads/2026/04/agentic-identity-and-access-control.pdf

9. Strata Identity, “Securing Autonomous AI Agents,” Cloud Security Alliance, 2025. Survey of 285 IT and security professionals, conducted September–October 2025. Summary at https://www.strata.io/blog/agentic-identity/the-ai-agent-identity-crisis-new-research-reveals-a-governance-gap/

10. Cloud Security Alliance, “Securing Non-Human Identities in the Age of AI Agents,” RSAC 2025. https://cloudsecurityalliance.org/artifacts/securing-non-human-identities-in-the-age-of-ai-agents-rsac-2025 (45:1 lower bound). Entro Labs, “NHI & Secrets Risk Report H1 2025,” enterprise data collected January–June 2025. https://23579664.fs1.hubspotusercontent-na1.net/hubfs/23579664/Assets/EL-The-NHI-Secrets-Risk-Report-H1-2025.pdf (144:1 upper bound, up from 92:1 in H1 2024).

11. Gartner, “Top Strategic Technology Trends 2025,” 2024. Widely cited in Gartner public communications; original report is client-access only.